Bioethics Forum Essay

Diving Deeper into Amazon Alexa’s HIPAA Compliance

Earlier this year, consumer technology company Amazon made waves in health care when it announced that its Alexa Skills Kit, a suite of tools for building voice programs, would be compliant with HIPAA, the Health Insurance Portability and Accountability Act, which protects the privacy and security of certain health information. Using the Alexa Skills Kit, companies could build voice experiences for Amazon Echo devices that communicate personal health information with patients.

Amazon initially limited access to its HIPAA-updated voice platform to six health care companies, ranging from pharmacy benefit managers to hospitals. However, Amazon plans to expand access and has identified health care as a top focus area. Given the recent announcement of new Alexa-enabled wearables (earbuds, glasses, a biometric ring)—likely indicators of upcoming personal health applications—let’s dive deeper into Alexa’s HIPAA compliance and its implications for the health care industry.

When the Amazon Echo was released in November 2014, health care was immediately targeted as an opportunity area for innovation. However, grand plans for Alexa’s health applications fell through as developers realized that the platform could not legally store or transmit protected health information. This information could only be accessed and communicated by HIPAA-covered entities, including health plans, health care providers, and health care clearinghouses.

For years, HIPAA prevented Amazon Alexa from approaching any serious medical undertakings. Alexa partnered with groups such as the Mayo Clinic to develop skills that offered basic allergy reports or first-aid information—information too general to be covered under HIPAA. When trialed in hospital settings, Alexa connected patients to resources (e.g., offered directions, summoned a nurse) but could not directly interact with patient information.

For Alexa to achieve HIPAA compliance, Amazon needed to complete two tasks. First, because Amazon did not qualify as a covered entity under HIPAA, it was required to enter a business associate agreement with one. In a business associate agreement, Amazon promises to abide by the same regulations as covered entities and to provide protected health information only to covered entities for their explicit use. Second, Amazon needed to update Alexa’s software to a standard that convinced covered entities it could transmit private patient information safely and responsibly. (I discuss the limitations of this goal later in this article.)

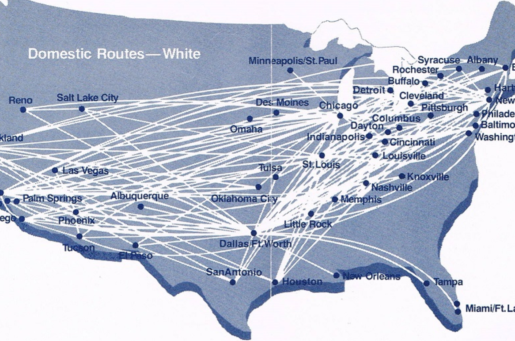

In April 2019, Amazon announced HIPAA compliance for Alexa and introduced six business associate agreements. Alexa’s partners included Express Scripts, Cigna Health Today, Boston Children’s Hospital, Providence St. Joseph Health, Atrium Health, and Livongo. Their skills involved tracking prescription deliveries, logging personal health goals, providing patient updates to care teams, locating urgent care centers, scheduling appointments, and querying previous blood sugar readings.

At the time of this article’s publication, Amazon is still the only leading tech company with a HIPAA-compliant voice platform; Google Assistant, Apple Siri, Samsung Bixby, and Microsoft Cortana have yet to follow suit.

Despite its achievement, Alexa is still relatively limited in its patient interactions; the confined scope of skills announced and their medical inconsequence suggest that covered entities don’t trust Alexa as a substantial tool just yet. Each of these skills could be accomplished through a computer screen, an indication that voice isn’t yet being treated as an independent platform with its own value-adds.

For voice to achieve its full potential in health care (e.g., conversational diagnosis, contextual care plans, detection of in-home emergencies), several engineering considerations must be addressed. Foremost are questions of sound and environment: how do voice platforms transmit sensitive information privately (i.e., without broadcasting to a room full of people), and how do they accurately decipher patient data from background noise? Other challenges involve patients’ different speech patterns and abilities, as well as voice’s role in identifying and escalating emergencies.

There’s also the question of the business associate agreements themselves. They were traditionally intended for behind-the-scenes health care processes: a billing accountant seeing a patient’s procedure history, for example. “This is kind of turning the notion of HIPAA privacy on its head. It’s data coming in through the business associate,” says Drexel law professor Robert Field, speaking on the podcast Knowledge@Wharton. Both the Department of Health and Human Services (HHS) and companies like Amazon are expected to push for a reevaluation of voice’s role within the HIPAA chain.

What comes next for Amazon and Alexa’s health capabilities? Other announcements at the company indicate that Amazon’s health care ambitions extend beyond business associate agreements. The company’s 2018 purchase of PillPack online pharmacy, its extension of Prime discounts to Medicaid recipients, and the formation of Haven Healthcare (a joint effort with Berkshire Hathaway and J.P. Morgan) suggest that Amazon is preparing to establish itself as a large, comprehensive player in the market. It has yet to be announced whether Amazon/Haven will negotiate directly with health providers, assume (or erase) the role of a pharmacy benefit manager, or enter the market in a new role altogether. Any of these moves may put Amazon in competition with the same companies it has invited into Alexa HIPAA partnerships.

As AI evolves, we must also consider Alexa’s future role as a diagnostic and prescribing agent. Whether partnering with care providers or replacing them directly, Alexa’s interpretation of patient data will likely extend beyond the scope of the business associate agreement. We have yet to understand how we would regulate technology as a provider; the closest analogy we have today may be telemedicine, where a provider communicates with a patient through a device. However, this analogy breaks down if and when the device becomes the doctor.

Lastly, Alexa’s various roles in health care stand to confuse (or potentially exploit) users. Normally, patients have a clear understanding of which entities they’re speaking to and when: they use signals, such as a website logo or direct mail letterhead, to distinguish their doctor’s communications from their pharmacy’s or their insurer’s. These signposts are muddled on voice. For example, if a patient receives an allergy report from a pharmaceutical company, requests a prescription from their doctor, and discusses a generic with their pharmacy, all through Alexa, they could easily forget who they’re talking to and in which context. That confusion leaves ample room for conflicts of interest.

Adriana Krasniansky is a graduate student at the Harvard Divinity School studying the ethical implications of new technologies in health care, and her research focuses on personal and societal relationships to devices and algorithms. A version of this essay originally appeared in Bill of Health, the blog of the Petrie-Flom Center at Harvard Law School.
