Bioethics Forum Essay

Gene Drive Technology: Lessons of the Atomic Bomb

At the age of 15, in August 1945, I heard the radio announcement of the dropping of the atom bomb on Hiroshima. It left an indelible, unsettling mark on my memory, never quite matched since. Years later, in 1986, I read Richard Rhodes’s superb book, The Making of the Atomic Bomb. I then learned in full just why that bomb meant more to me than I had earlier understood. It not only ended a war, surely a human benefit, but no less left us with a monster threat to human life that will never go away.

Recently, in the context of a new Hastings Center initiative on the ethics of emerging technologies, I decided to take another look at the Rhodes book. After doing so I concluded that the research, the building, the using of that bomb–and then the post-war effort to regulate its proliferation–was surely the first major effort in history to look at an emerging technology in all its actual and potential implications. It offers a timeless paradigm for similar efforts.

Creating the Bomb. The knowledge to build the bomb first appeared on the horizon with the discovery of radioactivity at the end of the nineteenth century and then of nuclear fission in Nazi Germany in 1938. Rhodes calls it naïve to think that physicists could have come together to keep the fission knowledge a secret. Not only would that have been impossible, but only one scientist, Leo Szilard, saw the destructive possibilities of nuclear fission.

As the research moved forward and knowledge developed to make the bomb, there was no turning back; the knowledge was out there. As Rhodes put it, “to stop it you would have to stop physics.” He then added, “Nor can you have only benevolent knowledge; the scientific method does not filter for benevolence.” He cited Robert Oppenheimer saying that “the deep things in science are not found because they are useful; they are found because it was possible to find them.” Even so, as the research went forward, there was some internal resistance by a few scientists and much tortured ambivalence among others. But World War II was under way, the bomb was irresistible as a possible way to win it, and the Manhattan Project was secretly created by President Roosevelt to make the bomb.

The demonstration and use of the bomb. Once the ingredients for the bomb were put together, the bomb had to be tested. Some scientists thought it possible that its explosion could ignite the atmosphere or even destroy the world, but that was a minority view, and not enough to stop the test. The test was akin to what those of us in health care might call a clinical trial. Once the bomb was a tested reality at Alamogordo, the next issue was how to demonstrate and use it. What would it take to persuade the Japanese to surrender?

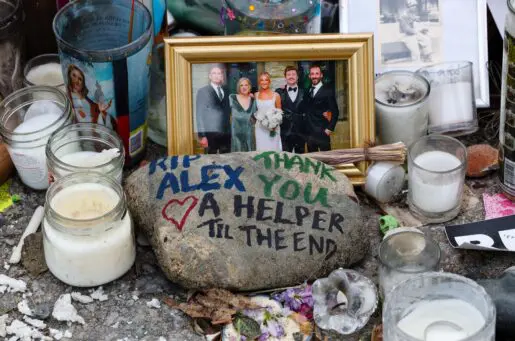

Some argued that a demonstration of the bomb away from a city would be sufficient to get the message across, while others–with a possible toll of 1 million dead American soldiers if Japan had to be invaded–opted for destroying cities as the most effective demonstration. President Truman apparently did not do much moral wrestling with the decision. Hiroshima and Nagasaki were targeted. And the science that made possible the atom bomb turned out also to lead to an irresistible way to create an even more destructive device, the hydrogen bomb, invented not much later. In the years to come, while some bombs became bigger, smaller and more mobile bombs were also created. If use of the big ones seemed to guarantee MAD–mutual assured destruction–maybe the little ones would allow safer nuclear wars. Surely a consoling thought?

Regulating nuclear weapons. Nuclear proliferation soon flowed forth. Soviet espionage provided the secret information the Soviet Union needed to build its own bombs. A number of other countries soon followed. The Treaty on the Non-Proliferation of Nuclear Weapons was developed, and most countries eventually joined it, but a few nuclear-armed states never signed. Israel, which never signed the treaty, is known to have nuclear weapons but has never openly admitted it, while both Iran and North Korea have secretly carried out research to develop them. Just recently there has been news of refined American and Russian nuclear missiles and of a slowdown in the reduction of America’s cache of weapons that was part of an agreement with Russia.

Why did I call the making of the atomic bomb the great paradigm? Here are some key ingredients. Once research has begun on a new and promising technology, the knowledge gained cannot be eliminated even if the technology turns out to be dangerous or it simply fails as science. Someone later can use and augment that knowledge to do a better job. In any case, there is no telling when new research begins just what else of importance may turn up on the way (think of penicillin). One can talk all one wants about the necessity of cost-benefit studies of research with a mix of possible harms and benefits, but if it can be shown that many lives will be saved despite the harms, there will be pressure for the research to go forward.

That pressure may have to be resisted. Lives may be saved by other, less dangerous, kinds of research; and it may well be that a demonstration of the atomic bomb outside of Japanese cities would have worked to end the war. In any case, even if good regulations are put in place to reduce the dangers, or moratoriums are established, there are likely always to be rogues ready to make use of the available knowledge. As Oppenheimer once said of scientists, “When you see something that is technically sweet, you go ahead and do it and you argue about it after you have had your technical success.”

I had no sooner drafted this piece than I read about the important study carried out by the National Academies of Sciences, Engineering and Medicine on gene drives. That study (of which my Hastings colleague Gregory Kaebnick was a part) aims to offer a roadmap on the regulation and oversight of a new genetic technology that may make it possible to transform and even to eliminate entire populations of organisms in the wild. One can see in this report the full atomic bomb paradigm in play, beginning with whether the research should go on at all, to how it might be organized to take into account complex scientific and ethical considerations, and then how to regulate it. The report makes clear that it could be a long and contentious struggle. While there may well be some rogue scientists who will strike out on their own, and perhaps no way to stop them, the issue is now in the first stage. But for that stage the report effectively lays the groundwork for a meaningful public and scientific discussion and debate.

Daniel Callahan, cofounder and President Emeritus of The Hastings Center, is the author most recently of The Five Horsemen of the Modern World: Climate, Food, Water, Disease, and Obesity (Columbia University Press, 2016).

Two significant differences between nuclear and modern genetic technology are ease of mastery and use, and ubiquity. Only nations have the scarce resources and expertise to build nukes, and the costs of doing so are massive. Today’s genetic engineering tools are already largely democratized, and the costs of acquiring and deploying them, including tools that are still emerging, are low and falling fast. Thus societal techniques for controlling and governing the genetics revolution unfortunately cannot be based on easy replications of nuclear containment.