Bioethics Forum Essay

GM Mosquitoes: Risks and Emotions

For several years, a British company called Oxitec has been proposing a strategy for controlling a species of mosquito, Aedes aegypti, that humans have accidentally carried from Africa to other parts of the globe, thereby also spreading a risk of dengue fever and other diseases for which A. aegypti is a vector. One of the places where A. aegypti has taken up residence is Key West, Florida, and in 2009 and 2010 it contributed to an outbreak of dengue there. A. aegypti has developed resistance to the insecticides that have been the first line of defense against it, and local authorities in Key West now want to try Oxitec’s approach: releasing millions of male A. aegypti that have been genetically modified so that when they mate, their offspring will receive a gene that prevents them from developing into adults unless they are bathed in the antibiotic tetracycline. If enough males are released that a high percentage of the females living in Key West mate with them, then the population will crash. According to Oxitec’s research, a population reduction of as much as 90 percent is possible.

Polls in Florida suggest that a modest majority of residents think the plan is acceptable, but at recent hearings some have been vehemently opposed. Some of the concerns seem to be about health risks: “We don’t want to be guinea pigs,” said one resident. Other concerns are about the possibility of unknown environmental risks — that if the mosquitoes disappear, something worse might take their ecological place. Perhaps organisms that consume mosquitoes might suffer. Perhaps there is an unspoken worry, too, that the altered mosquitoes might not disappear at all, but might somehow survive the fault engineered in their stars and emerge better — worse — than ever.

As I understand the plan, the theory behind the engineered trait suggests that it should be safe: there are no known mechanisms by which it could pose either health risks or environmental harms. It has also been tested in the field (controversially), and the testing has not turned up any problems.

When emerging technologies are put to use, though, experts’ best-available assurances don’t always allay concerns. The objections can begin to seem unmovable. Such cases raise questions not just about the facts on the ground but about what’s going on inside the objections, as it were.

A large literature has accumulated around this question. A long series of studies on the “perception of risk” suggests, for example, that the popular assessment of risks depends not merely on the extent or probability of harm to life or health, but also on such qualities as whether the risk is hidden, is unfamiliar, can be controlled, or arouses “dread,” as the psychologist Paul Slovic has put it. These qualities are connected to how people feel about the interventions and suggest that the objections are partially detached from the facts: they are partly a matter of feeling. Genetic modifications rank highly on such “psychometric” scales, which helps explain the reaction to genetically modified mosquitoes.

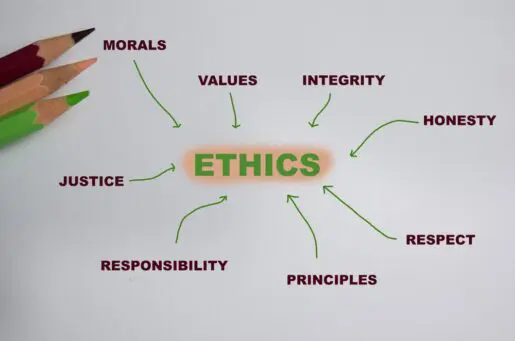

Deciding what to do with this information is very difficult, however. Are “dread” and other emotional aspects of risk perception irrational considerations that should be ignored? Or are they meaningful, important aspects of “harm”?

Scholars doing work on risk perception and risk analysis tend to opt for tossing out the emotional aspects of harm. Sometimes, they are surely right, and certain “framing effects” are perhaps the best example of how our emotions can seem to get in the way. As a study by Amos Tversky and Daniel Kahneman famously showed, we seem to be more drawn toward a treatment if it is described (“framed”) as saving 200 of 600 people rather than as allowing 400 of 600 people to die. The language of saving people seems to trigger a warm glow that causes us to see the treatment differently than we do if the same treatment is framed in terms of loss. Many other studies have also found framing effects. (Here’s one striking example: a disease that kills 1,286 people out of every 10,000 seems worse than one that kills 24.14 percent of the population, according to a study by Kimihiko Yamagishi.)

But there are also reasons to think that some of the emotional aspects of risk perception need to be taken into account in decision-making about risk. Insofar as the perception of risk is the estimation of possible bad things, it is fundamentally a matter of values, and values are at least closely connected to feelings and perhaps rest fundamentally on feelings (or “sentiments,” a philosopher might say). To evaluate a risk, which is to bring out the values associated with it, is then partly a matter of paying attention to how people feel about it. Indeed, if a “reasonable person” (a fictional character who regularly figures in legal standards) is somebody who shares a society’s basic values, then emotional responses are actually part and parcel of rationality. From this perspective, it may well justifiably matter not only that we might die but also how we might die. As the risk analysis scholar Adam Finkel has put it, a comparatively quick death in an automobile accident may seem preferable to a terror-filled death in an airplane accident, justifiably leading people to prefer a somewhat larger risk of the former over a minuscule risk of the latter. In short, perhaps risk analysis should at least sometimes pay attention to dread.

Recognizing that risk analysis is a question of values also suggests that decision-making about projects such as genetically modifying mosquitoes should be influenced by concerns that might be matters of feeling. Questions about who bears the risks and whether the risks are dealt out fairly may be vitally important, for example. There are also broader questions about the human relationship to nature. If we think that the human relationship to the natural world is that of a gardener to an unruly plot of land, then we may give wider latitude to the manipulation of wild populations. But if we take a more preservationist stance, holding that humans ought to some degree to preserve the world as we find it, then we may opt to be more restrictive.

This line of thought leads to really big questions. Should there be mosquitoes at all? They’re an enormous health threat even where they’re native, and they’re a nuisance even when they’re not a health threat. And while we’re contemplating the elimination of living species, what about the re-creation of extinct ones? It’s too bad that we apparently killed off the woolly mammoth, and seeing one again, as some ethicists argued in Science in 2013, would be quite cool.

The questions are also exceedingly complex. Opting for a preservationist stance toward nature, for example, does not necessarily give reason to entirely rule out the use of genetic interventions in wild populations. Since A. aegypti thrives in Key West because of human intervention in the first place, a very safe mechanism for removing it from that location might actually serve preservationist goals. A still stronger case could be made for an intervention that eliminated the woolly adelgid from the eastern United States, where the insect has largely eliminated the native eastern hemlock. A preservationist case could also be made for a genetic intervention that allowed the American chestnut to withstand chestnut blight, an intervention that is currently under way and that, if successful, would count as a virtual de-extinction event.

As the technological possibilities come into view, the moral complexity may be hard to keep in mind. We want to stay true to our values. That can seem to mean we should be firm and consistent, and firmness and consistency can seem to mean simple, toe-the-line positions — “principled,” “rational” answers. But moral rationality is not mathematical rationality, and careful attention to our emotional reactions will be necessary.

Gregory E. Kaebnick is a research scholar at The Hastings Center and the editor of the Hastings Center Report. He is the author of Humans in Nature: The World as We Find It and the World as We Create It (Oxford University Press, 2013).

Posted by Greg Kaebnick at 02/20/2015 01:28:08 PM